- cross-posted to:

- technology@lemmy.world

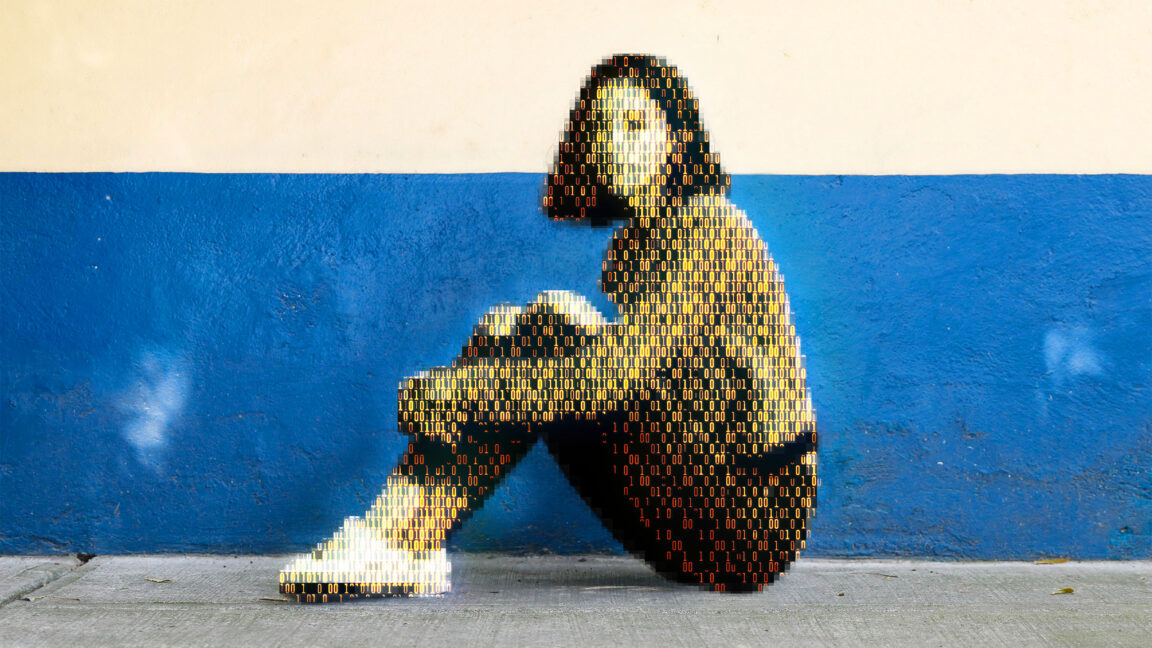

Today, Thorn, a prominent child safety organization, in partnership with Hive, a leading cloud-based AI solutions provider, announced the release of an AI model designed to flag unknown CSAM at upload. It is the earliest AI technology aimed at exposing unreported CSAM at scale.

Australia has a more general ban on selling or exhibiting hard porn, but it is legal to possess it. So it’s not just small boobs.